5 Security Concerns When Using Static Site Generators

Static == Secure, Right?

If you’ve read my recent post extolling the virtues of static site generators for secure web development, you might think that deploying a static site makes you more or less invulnerable to cyber adversaries. Although it’s true that going static dramatically reduces your vulnerability profile, it doesn’t quite eliminate it.

This post considers five possible attack vectors against websites built with static generators (e.g. Jekyll or Hugo) and how best to protect yourself against them.

1) Vulnerable Hosts

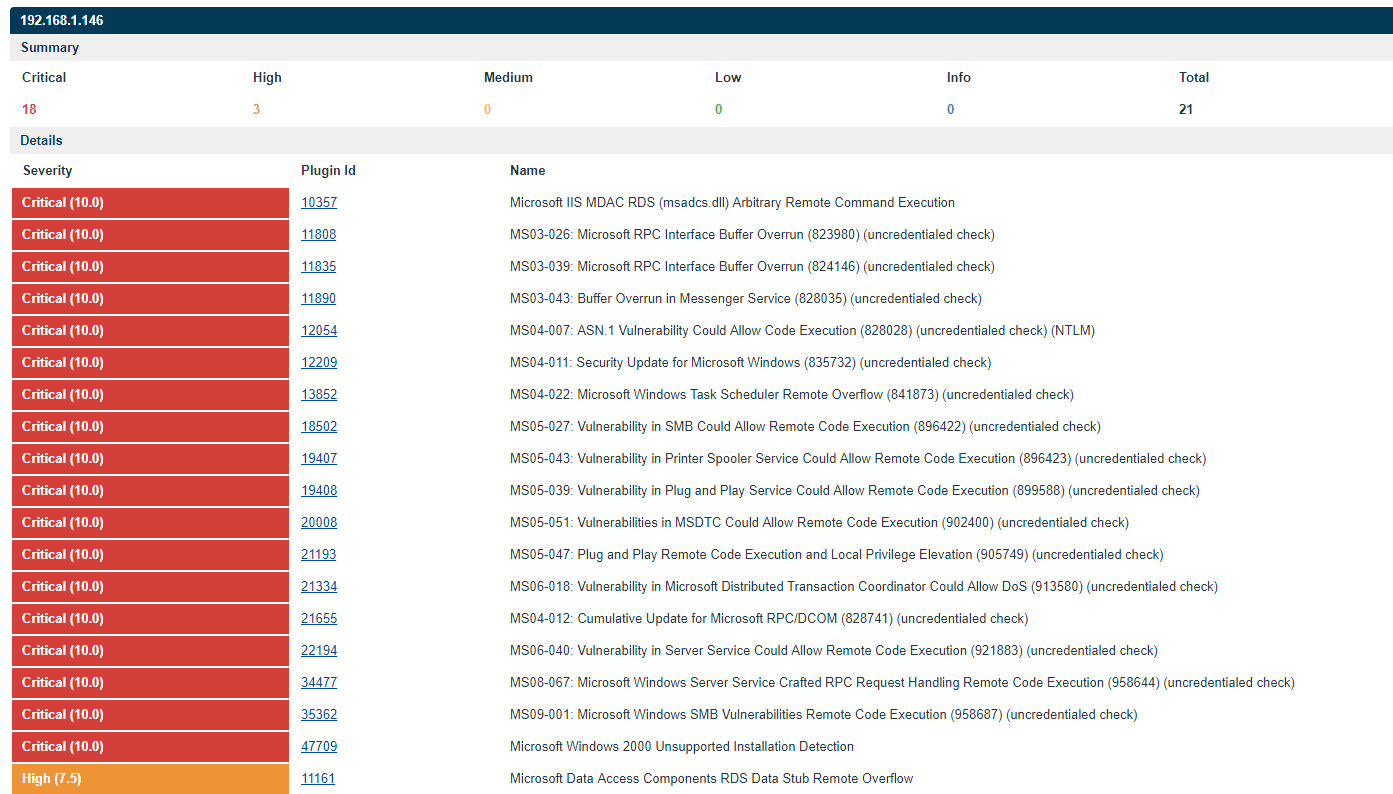

One of the chief benefits of static sites is that they open up a wide range of non-traditional and serverless hosting options. That said, many reasonable people might prefer to self-host their personal website on a home server or cloud platform. Hosting a static site may create a false sense of security and encourage the owner to overlook systems administration tasks.

When hosting a static site with a full-fledged web server like Nginx or Apache, the overall security state of the web server itself remains important. Server-level vulnerabilities, such as directory traversal or attacks that rely on malformed HTTP requests, can still give hackers a way to deface or maliciously modify your website.

Don’t think your boring database-free site isn’t an attractive target either. A popular static website with links can be a powerful tool in distributing malware, engaging in blackhat SEO, or building a botnet.

How to Fix

- Keep it simple.

  Every plugin/add-on/extension/package that integrates with your web server is another potential attack surface or vulnerability multiplier.

- Isolate.

  Consider containerizing your static application with [Docker](https://www.docker.com/) to isolate it from the underlying host (where other, more sensitive and important data might be stored). Any minor performance difference from containerization will be invisible at the loads generated by static hosting.

- Patch.

  Keep your server patched and up to date. Monitor the [National Vulnerability Database](https://nvd.nist.gov/) and pay attention to any security updates released by your server’s developers.

- Be selective.

  Choose an actively supported web server with a wide user base and a focus on security. Your chances of falling victim to the sort of basic routing vulnerability most likely to impact static sites go way up if your site depends on that GitHub project with a total of 3 commits from 2008.

2) .git Leakage

Where’d you put the git repository for your static site? Is that URL a publicly routable directory? Many tutorials encourage the creation of a .git directory inside the folder generated by your site generator for easy deployment.

You may think your .git folder doesn’t have any secrets. After all, it’s just the code for a publicly available site, right? But an adversary can use the repository to view orphaned pages and files that haven’t been linked yet, read drafts and unpublished stories, or even flip through your site’s history and read content you’ve elected to delete for one reason or another.

Additionally, because .git repositories can grow quite large over time, a leaked .git repository can turn into a significant force multiplier for denial of service attacks (see #3 below).

How to Fix

- Minimize Deployments

  Manually or programmatically review the static folder built by your site generator to ensure that only those files you want your end user viewing are included in the final project. Not only does this save you from information leakage issues, it also helps keep your site small, cheap, and nimble.

- Blacklist/Whitelist URLs

  If you’re using a full-fledged server to host your static site, consider taking advantage of any URL modification features the server offers to limit your users’ GET requests to only those folders which they should be able to view.

- Relocate the Repo

  Refactor your repository so the .git folder is no longer located in a folder that is made publicly routable by your site generator. If this is not possible, write a script to automatically delete or exclude the .git folder as part of your deployment process.
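
The programmatic review described above could be sketched roughly as follows. This is only an illustration: the `FORBIDDEN_NAMES` deny-list and the `find_forbidden_paths` helper are hypothetical names, and the list would need to be adapted to whatever your own generator and workflow leave behind.

```python
import os
import tempfile

# Hypothetical deny-list: names that should never appear in a published folder.
FORBIDDEN_NAMES = {".git", ".gitignore", ".env"}


def find_forbidden_paths(build_dir):
    """Return sorted paths (relative to build_dir) whose name is on the deny-list."""
    hits = []
    for root, dirs, files in os.walk(build_dir):
        for name in dirs + files:
            if name in FORBIDDEN_NAMES:
                hits.append(os.path.relpath(os.path.join(root, name), build_dir))
        # Do not descend into forbidden directories; reporting their top level is enough.
        dirs[:] = [d for d in dirs if d not in FORBIDDEN_NAMES]
    return sorted(hits)


if __name__ == "__main__":
    # Build a throwaway "site" containing a stray .git folder to demonstrate the check.
    with tempfile.TemporaryDirectory() as site:
        os.makedirs(os.path.join(site, ".git", "objects"))
        open(os.path.join(site, "index.html"), "w").close()
        print(find_forbidden_paths(site))
```

A deployment script could refuse to publish whenever this function returns a non-empty list, which catches the problem before the folder ever reaches the host.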

3) Denial of Service

A denial of service attack against a static website is much more difficult to execute than one against a dynamic website. Static requests are far easier to handle than dynamic requests that require computation on the server side.

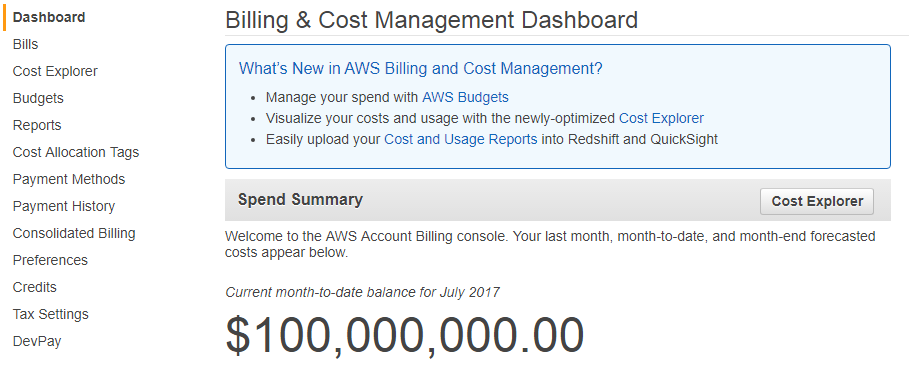

That said, some of the most attractive serverless hosting options for static sites, such as Amazon’s S3 cloud storage, incur per-request charges and can produce unexpected bills at volume.

Imagine, for example, a very humble 50 Megabit/s DOS attack against your static site. All the attack does is send GET requests for a static 1 KB file. Every hour your S3 bucket gets hit with 22.5 million requests. Running those numbers through the AWS Cost Calculator shows that this attack would cost you around $215 per day.
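
The arithmetic behind those figures can be reproduced with a few lines of Python. The per-request price below is an assumption chosen for illustration (USD 0.0004 per 1,000 GET requests); real pricing varies by region and over time, and this sketch ignores data-transfer charges entirely.

```python
# Assumed price, for illustration only: USD per 1,000 GET requests.
ASSUMED_PRICE_PER_1000_GETS = 0.0004


def requests_per_hour(attack_megabits_per_s, response_kilobytes):
    """Requests per hour needed to fill the given bandwidth with fixed-size responses."""
    bytes_per_second = attack_megabits_per_s * 1_000_000 / 8
    requests_per_second = bytes_per_second / (response_kilobytes * 1_000)
    return requests_per_second * 3600


def daily_request_cost(hourly_requests, price_per_1000=ASSUMED_PRICE_PER_1000_GETS):
    """Per-request charges for 24 hours of sustained traffic at the given hourly rate."""
    return hourly_requests * 24 / 1000 * price_per_1000


if __name__ == "__main__":
    hourly = requests_per_hour(50, 1)
    print(f"{hourly:,.0f} requests per hour")
    print(f"${daily_request_cost(hourly):,.2f} per day in request charges")
```

Under that assumed price, 50 Megabit/s of 1 KB responses works out to 22.5 million requests per hour and roughly $216 per day in request charges alone, which is in line with the estimate above.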

This sort of attack is a drop in the bucket from Amazon’s perspective and could go unnoticed and unmitigated for several hours. Even if it were noticed, an ornery adversary who just personally dislikes you could always run a rate-limited attack at a more modest 1,000 requests per second and still up your hosting costs to roughly $1,000 per month.

A static site gives you few self-hosted mechanisms for limiting attacks. You can’t automatically block problematic IPs or suspicious clients. You can’t even set internal server limits to cause the server to stop responding to requests after a certain traffic threshold.

The scalability and affordability of static sites look great at first pass. After all, serving an ambitious one million legitimate requests out of an Amazon S3 bucket would only cost you $0.34 a month! That is far cheaper than any dynamic hosting option.

However, if you approach static site ownership with a feeling of invulnerability you might quickly find yourself in an expensive and unfortunate situation. DOS attacks are a fundamental characteristic of the modern web and, although all-static gives you a thicker layer of armor, it doesn’t make you invincible.

How to Fix

- Know your acceptable costs

  How much is this site worth to you? How much are you willing to pay for a spike in traffic? If you get featured on CNN or some other major site, do you want to continue serving your customers or go silent at the moment when your audience is the largest? Having answers to these questions is a critical component of deciding how and when you’ll respond to traffic spikes.

- Monitor your bills

  AWS makes it dangerously easy to just ‘serve-and-forget.’ Bills are automagically paid each month from your credit card, emails are easy to ignore, and costs are hard to estimate or consolidate. Other hosting providers have the same issues to greater and lesser degrees. Know how much your site should be costing you and keep an eye on outliers.

- Set up a kill switch (or at least alerts)

  Many hosting providers will help you configure a kill switch that lets you take your site offline once you reach a certain bandwidth or cost threshold. Others (hint: AWS) will happily take your money for as long as they can. Pretty much every reputable host offers billing alerts that will send you an email when you reach pre-configurable cost thresholds. Be sure to pay attention to these emails or configure switches to cap your DDOS risk.

- Use DDOS protection

  Depending on your hosting situation, a DDOS protection service may be a viable option for your deployment. Dozens of articles could be written comparing such products, but a popular freemium option is Cloudflare.
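
The alert and kill-switch thresholds described above boil down to a simple decision rule, sketched here in Python. The `budget_action` function and its threshold parameters are hypothetical; how you actually obtain a month-to-date cost figure, and how you take a site offline, depends entirely on your hosting provider.

```python
def budget_action(month_to_date_cost, alert_at, kill_at):
    """Map spend so far this month to 'ok', 'alert', or 'kill'."""
    if alert_at > kill_at:
        raise ValueError("alert threshold must not exceed kill threshold")
    if month_to_date_cost >= kill_at:
        return "kill"   # take the site offline (or fall back to a cheaper host)
    if month_to_date_cost >= alert_at:
        return "alert"  # notify a human who will actually read the message
    return "ok"


if __name__ == "__main__":
    # Example thresholds: warn at $5, pull the plug at $50.
    for cost in (0.34, 12.00, 215.00):
        print(cost, budget_action(cost, alert_at=5.00, kill_at=50.00))
```

Writing the thresholds down as numbers, even in a toy script like this, forces you to answer the ‘acceptable cost’ question before an attack rather than during one.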

4) External Injections

As your static site evolves, you may wish to pull in content from around the web in the form of links, images, even embedded JavaScript or HTML media viewers. This sort of content helps limit the ‘wall of text’ feel a long static article can instill (ahem) and makes a static site feel richer and more modern.

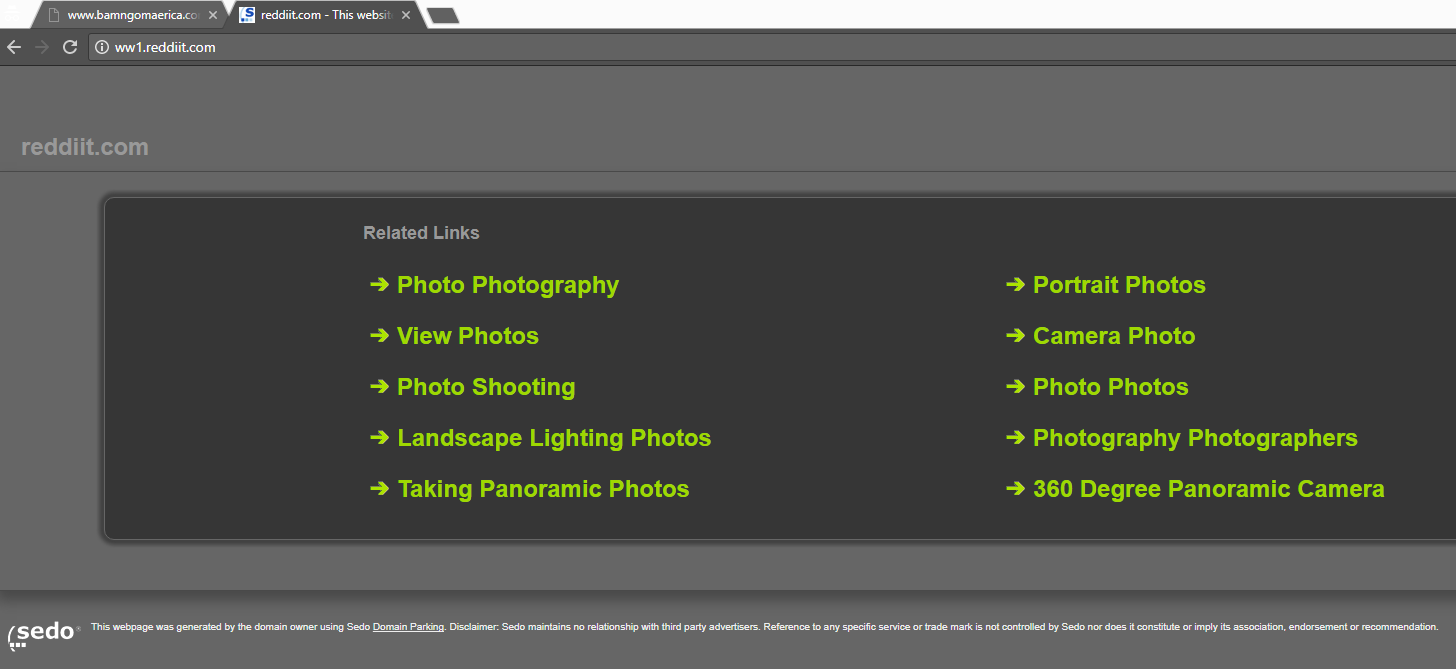

Unfortunately, with new features comes new responsibility. A trustworthy link today can become a parked domain or malware host tomorrow. As the maintainer of a public site on the internet, you have entered into a sort of social contract to protect your users to the best of your ability, yet links and included files outside your control could quickly wreak havoc.

For example, Mario Heiderich demonstrated in 2011 that .svg images could be used as a tool for cross-site scripting in some vulnerable browsers. If your site included an image like `<img src='https://evilsite.org/trustme.svg'>` and evilsite.org turned out to not be as trustworthy as you initially hoped (!!!), you might find yourself inadvertently serving unseemly advertisements or even malware on your static site.

How to Fix

- Go it alone

  If you can afford to self-host media, do so. It’ll make your site snappier and limit your dependency on others. Not only does this keep your users safe, it also means that when those (lesser) sites go out of business and your great site is the last piece of the internet still standing, you won’t have a bunch of unseemly dead images and 404 errors cluttering up your page.

- Trust only the trustworthy

  Sometimes you can’t host content. Maybe you only have so much bandwidth. Maybe you want to keep a JavaScript file up to date and snappy by using a CDN. Maybe you’re just lazy and don’t like copying media files unnecessarily. In these cases, try to stick to well-known hosts with a good reputation. Don’t go with the first result on Google Images for a company logo, for example; instead, try to link to the officially hosted logo on the company’s website. If you use a CDN, try to use a reputable one like Google’s rather than the first one that pops up in an online tutorial.

- Maintain your site

  One of the appeals of static sites is that you can just ‘post and forget.’ With no comments to moderate (absent an external tool like Disqus), no user input to deal with, and no changes to keep track of, a static page is about as close to an internet monument as one can get. Unfortunately, while the page may stay the same, the internet around it will change. Take some time every few weeks to go through the links and external content on your site and check that they all go to live pages with the appropriate content. You can also use automated tools like Google Webmaster Tools, the W3C Link Checker, and the immortal Xenu.
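
As a starting point for that kind of periodic review, the following Python sketch lists every `href` and `src` on a page that points at a host other than your own. It uses only the standard library; the `ExternalResourceFinder` class, the `find_external_resources` helper, and the `example.com` host are illustrative names, not part of any particular tool mentioned above.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ExternalResourceFinder(HTMLParser):
    """Collect href/src URLs that point at a host other than our own."""

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host
        self.external = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                host = urlparse(value).netloc
                # Relative URLs have no host part and are served by us.
                if host and host != self.own_host:
                    self.external.append((tag, value))


def find_external_resources(html, own_host):
    """Return (tag, url) pairs for every externally hosted link or resource."""
    finder = ExternalResourceFinder(own_host)
    finder.feed(html)
    return finder.external


if __name__ == "__main__":
    page = """
    <a href="/about/">About</a>
    <img src='https://evilsite.org/trustme.svg'>
    <script src="https://example.com/app.js"></script>
    """
    for tag, url in find_external_resources(page, "example.com"):
        print(tag, url)
```

Running something like this over the generated HTML gives you a concrete list of third parties you are trusting, which is the list worth re-checking every few weeks.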

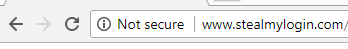

5) Unencrypted Traffic

This issue might seem a little odd. After all, static sites should not handle secret information - whether through AJAX API calls, or direct hosting, or pretty much anything else. If all of the information served by your site is public, why does it matter if the traffic is encrypted?

As I mentioned above, there exists an implicit social contract between a responsible web host and their end user. As a host, you have a moral obligation to protect the user from cyber threats to the best of your ability when they are viewing your page.

TLS does more than just conceal POST parameters sent to web forms. It functions as a critical protection of user privacy on the internet, allowing users to browse the web without the fear of eavesdroppers building a deep profile of the articles they read, the pages they visit, and the content they consume. Certainly some of the routing metadata from your site will inevitably be available to ISPs and eavesdroppers, but encrypting whatever traffic you can is a common courtesy to your users and rarely takes more than a little effort and expense on your part.

Ideally, you can obtain a certificate for your domain from a legitimate, widely trusted certificate authority and serve your site with it. This adds an additional layer of protection by letting your users’ browsers verify that the content really comes from your server and has not been tampered with in transit.

How to Fix

- Use TLSv1.2+ where your host allows it

  These days, deploying TLS is close to drop-in effortless with most hosting providers. If it is at all feasible to limit your site to https:// traffic, consider doing so to protect user privacy and provide some identity guarantees from your web server.
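
One way to check what your host actually negotiates is to connect with a client that refuses anything older than TLS 1.2. The sketch below uses Python’s standard `ssl` module; the helper names `strict_tls_context` and `negotiated_tls_version` are hypothetical, and the check only tells you what one client saw, not everything the server is willing to accept.

```python
import socket
import ssl


def strict_tls_context():
    """Client context that verifies certificates and refuses anything below TLS 1.2."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(host, port=443, timeout=5.0):
    """Connect to host and return the negotiated protocol name as reported by ssl."""
    context = strict_tls_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()
```

Calling `negotiated_tls_version` with your own domain should either return a protocol name or raise an `ssl.SSLError` if the server cannot meet the minimum version or presents a certificate that fails verification.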

Recap

Security is not just for dynamic applications. When building a static site, remember to think about the content you’re hosting, the implicit promises you’re making to users, and the platform on which your site lives.

Static site generators promise to simplify the process of maintaining a secure web presence, but don’t let simplicity lead to complacency. Keep abreast of security issues in the technologies you use and consider these five basic examples as a starting point for a lifetime of #staticVigilance.